UPDATE: November 25, 2022

On November 25, 2022, the Indian Department of Telecommunications (“DoT”), named Respondent No. 2 in the World Phone action, filed a short affidavit documenting DoT’s request that the Telecom Regulatory Authority of India (“TRAI”) revisit its laissez-faire decision regarding OTT operators such as Facebook and WhatsApp.

UPDATE: September 9, 2022

On September 8, 2022, the Court ruled that the Indian Department of Telecommunications (“DoT”), named Respondent No. 2 in the World Phone action, was entitled to file an affidavit “placing relevant material on the record” regarding the issues “pertaining to internet telephony [that] have upon due consideration been remitted back to the Telecom Regulatory Authority of India [“TRAI”] for its consideration and opinion.” Legal counsel for Meta Platforms, Inc. (“Meta”) also suggested at the September 8, 2022 hearing that the rejection of TRAI’s recommendations did not necessarily mean Facebook and WhatsApp violated Indian regulatory law.

The September 8, 2022 hearing and ruling are significant for several reasons. First, they provide the first official confirmation that the DoT did in fact reject TRAI’s September 2020 laissez-faire recommendations. In 2020, TRAI did not recommend enforcement of DoT regulations on OTT operators such as Meta and WhatsApp. See “Regulatory Framework for Over-the-top (OTT) communication services” (14 September 2020). Without referencing the applicable laws or regulations, TRAI concluded on page 8 of its September 2020 recommendations: “It is not an opportune moment to recommend a comprehensive regulatory framework for various aspects of services referred to as OTT services, beyond the extant laws and regulations prescribed presently.” (emphasis added). On May 3, 2022, World Phone applauded the widely reported, yet previously unconfirmed, DoT decision to reject TRAI’s 2020 recommendations. That press account has now been officially confirmed by Respondent No. 2, but only because of the September 8, 2022 hearing in the World Phone action.

Second, this decision has now placed Meta on the defensive, in that the company will for the first time have to specifically address its lack of regulatory compliance. Armed with the positions of the DoT and Meta before the next scheduled hearing on December 8, 2022, World Phone’s counsel will demonstrate once and for all the illegal nature of the Facebook and WhatsApp services. Only after that is done will the Court finally rule on the matter.

All of this is very timely given that Google, a company that, like Microsoft, complies with the pertinent Indian regulations, has recently been at odds with Meta over the use of a government panel to oversee online content disputes. Meta wants no oversight from the Indian government. This is not a great surprise given that, as recognized by the local press, companies like Facebook have “for years been at odds with the Indian government, arguing that strict regulations are hurting their business and investment plans.”

Meta dwarfs all other “Western tech giants” operating in India. No other company even comes close. Within a few months’ time, the High Court in New Delhi will hopefully put an end to Meta’s Digital Colonialism. This can only be considered good news for Indians who value their independence and their right to self-sovereignty.

UPDATE: March 19, 2022

On its own motion, the Court adjourned the March 16, 2022 hearing without taking evidence or hearing any arguments. The next hearing date was scheduled for September 8, 2022. To date, the DoT has ignored the December 6, 2021 Order and has not taken “a final decision on the [TRAI] recommendations.”

It appears as if the Court recognizes it must eventually rule against Facebook and WhatsApp but would prefer to delay the inevitable.

UPDATE: December 8, 2021

On December 6, 2021, Justice Rekha Palli closed the pleadings and granted an adjournment request made by counsel for the Department of Telecommunications and the Union of India. The adjournment was granted so that the DoT could further evaluate TRAI’s 2020 recommendations.

Most importantly, the Court ruled that “it is expected that before the next date of hearing, the said respondents will take a final decision on the aforesaid recommendations. While doing so, it will also be open for them to consider whether any fresh recommendations are called for from the TRAI.” The next hearing is scheduled for March 16, 2022.

By not dismissing the action and instead moving the DoT off the sidelines, the Court dealt Meta a blow that may very well lead to the end of its unlicensed activities in India. Even though it would have been nice to see that happen in 2021, given Meta’s strong political ties in India, the old adage “better late than never” easily comes to mind.

On October 7, 2021, World Phone served WhatsApp with its response in the writ Petition filed by World Phone in India. World Phone previously filed its reply to the Facebook submission on August 25, 2021.

The World Phone Rejoinder provides a detailed analysis of why the Court should bar the use of WhatsApp until the company complies with applicable Indian law. To that end, it is anticipated that the Court will grant the requested injunctive relief on or about December 6, 2021 as to both Respondent No. 3 (Facebook) and Respondent No. 4 (WhatsApp).

Relevant sections of the filed Rejoinder are excerpted below.

In 2015, long before Respondents No. 3 and No. 4 solidified their current monopoly positions in India, TRAI already recognized that Respondents No. 3 and No. 4 were providing the top two mobile phone applications used in India. See Consultation Paper on Regulatory Framework for Over-the-top (OTT) services, para 2.39 at page 27 (27 March 2015) (Publicly available at https://trai.gov.in/sites/default/files/OTT-CP-27032015.pdf).

It is submitted that private monopolistic entities directly impacting the public interest are always subject to writ petitions. Zee Telefilms Ltd. & Anr v. Union of India & Ors., (2005) 4 SCC 649, para 158 (“A body discharging public functions and exercising monopoly power would also be an authority and, thus, writ may also lie against it.”) [emphasis added]. Given the strong public interest implicated by this Petition and Respondent No. 4’s exertion of monopoly power, the Petitioner’s writ Petition should proceed against all Respondents – including Respondent No. 4.

The fact that Respondent No. 4 provides, unhindered and without entering into a Unified License Agreement, Internet Telephony services functionally equivalent to those of an Internet service provider (“ISP”), an entity required to obtain a Unified License before providing such services, is well recognized and admitted by all Respondents. Such unlicensed activity violates Section 5 of the Indian Wireless Telegraphy Act, 1933; Sections 4 and 20A of the Indian Telegraph Act, 1885; Section 79 of the Information Technology Act, 2000; and the entire framework of the Telecom Regulatory Authority of India Act, 1997.

It is submitted that all such services provided by Respondents No. 3 and No. 4 in India should be “licensed pursuant to an agreement with the Department of Telecommunications, Government of India (“DoT”)”, notwithstanding that those Respondents characterize such services as “internet-based ‘over-the-top’ (“OTT”) services”.

It is submitted that Respondent No. 3, by its own averments, states that it provides unlicensed Internet Telephony Service/VoIP Calls. The Petitioner provides such services under a license procured from Respondent No. 2, and those services are governed by the Indian Wireless Telegraphy Act, 1933; the Indian Telegraph Act, 1885; the Information Technology Act, 2000; and the Telecom Regulatory Authority of India Act, 1997.

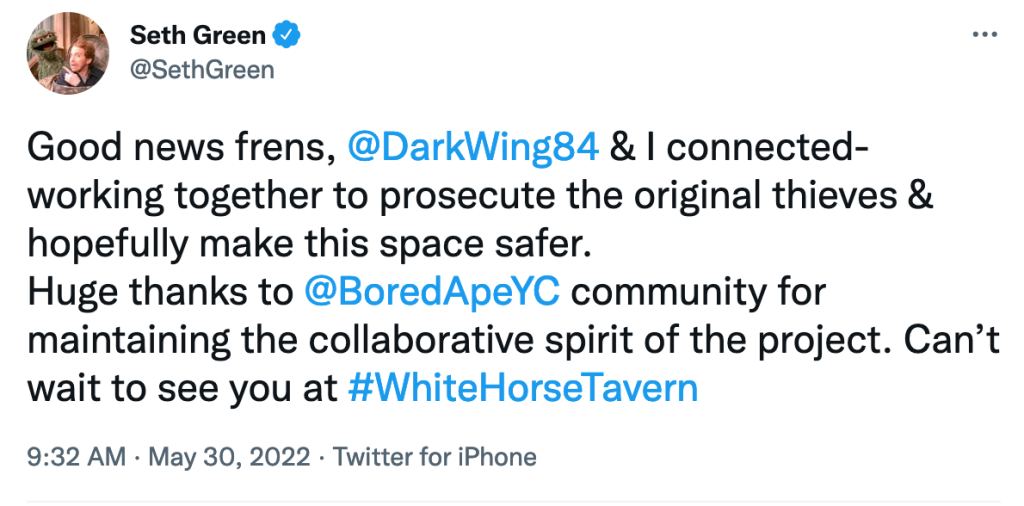

It is further submitted that this uneven application has allowed Respondents No. 3 and No. 4 to dominate the market completely, damaging other once-viable Internet Telephony service providers and driving them out of business. This market dominance has not gone unnoticed in the United States, where the US Federal Trade Commission filed an Amended Complaint on 19 August 2021.

Respondent No. 4 currently publicly opposes the enforcement of any interception rule. See “What is traceability and why does WhatsApp oppose it?” (Publicly available at https://faq.whatsapp.com/general/security-and-privacy/what-is-traceability-and-why-does-whatsapp-oppose-it) (“Some governments are seeking to force technology companies to find out who sent a particular message on private messaging services. This concept is called “traceability.” . . . WhatsApp is committed to doing all we can to protect the privacy of people’s personal messages, which is why we join others in opposing traceability.”) [emphasis added]. No matter what Respondent No. 4 does or does not do in this regard, it is submitted that the applicable rules on interception of communications are dwarfed by the financial commitments and rigorous checks and balances required under the Unified License Agreement and associated regulations, which Respondent No. 4 should adhere to given the Internet Telephony/VoIP services it provides.

The Hon’ble Supreme Court has recognized that

“it can very well be said that a writ of mandamus can be issued against a private body which is not a State within the meaning of Article 12 of the Constitution and such body is amenable to the jurisdiction under Article 226 of the Constitution and the High Court under Article 226 of the Constitution can exercise judicial review of the action challenged by a party. But there must be a public law element and it cannot be exercised to enforce purely private contracts entered into between the parties.” Binny Ltd. v. V. Sadasivan, (2005) 6 SCC 657, para 32.

It is submitted that the issues raised in this writ Petition concern existing legislation governing the services provided by the Petitioner and Respondents No. 3 and No. 4. Whereas the Petitioner operates under the Unified License Agreement issued by Respondents No. 1 and No. 2, Respondents No. 3 and No. 4 provide the same services while circumventing the existing legislation and remaining completely unregulated/unlicensed. This injustice can only be ruled upon by a Constitutional Court, namely this Hon’ble High Court under Article 226 of the Constitution or the Hon’ble Supreme Court of India under Article 32 of the Constitution, and not by the TDSAT. Moreover, the Petitioner respectfully submits that this Hon’ble Court should not rely on mere recommendations from TRAI.

It is submitted that, rather than simply ignoring applicable laws, others have sought to change the existing licensing regime. For example, Microsoft, suggesting that India should not be one of those countries having a licensing scheme for Internet Telephony, such as “Korea, Singapore, Hong Kong, Philippines, Thailand, Ecuador, and Mexico”, proposed a different approach: “Microsoft respectfully requests that the TRAI propose a regulatory approach wherein PC to PC VoIP requires no license (and is permitted to be transmitted by ISPs over their networks, public or managed, without restriction), and that only two-way PC to PSTN calling (both inside and outside of India) requires a light-touch registration or minimal licensing obligation, accompanied by appropriate regulations deemed necessary to protect consumers or address a market failure.” Response To Telecom Regulatory Authority of India Consultation Paper, Microsoft Corporation India Private Limited, page 14 (September 2016) (Publicly available at https://www.trai.gov.in/sites/default/files/201609060217157734124Microsoft_Corporation_India_Private_Limited.pdf).

Reliance Jio suggested: “The unrestricted Internet Telephony by the ISPs/OTTs may be allowed only if they migrate to the Unified License with Access services authorization or they offer this service under a commercial arrangement with an existing Access service provider.” Comments of Reliance Jio Infocomm Limited on the issues raised in the Consultation Paper on Internet Telephony (VOIP) (Consultation Paper No 13/2016 dated 22.06.2016), 5 September 2016, at page 9 (Publicly available at https://www.trai.gov.in/sites/default/files/201609060234264610172RJIO.pdf). Further, Reliance Jio suggested that “[i]t should be the responsibility of the Access Service Provider offering Internet telephony in collaboration with the OTT provider or otherwise to ensure that the international internet telephony calls are terminated in India through a licensed ILDO.” Id. at 13 [emphasis added].

Respondent No. 3’s current business partner, Reliance Jio, realized early on that a special “Facebook exception” was in its best interests. See “Stop illegal routing of internet telephony calls: COAI”, Economic Times (5 May 2016) (“The Cellular Operators Association of India (COAI) has urged the telecom department (DoT) to stop illegal routing of internet telephony calls, warning that a failure to do so would lead to a breach in telco licence conditions, pose security risks and cause sizeable losses to the national exchequer. Newcomer Reliance Jio Infocomm is also a COAI member, but the GSM industry body in its letter said Jio held a divergent view on the matter.”) [emphasis added] (Publicly available at https://economictimes.indiatimes.com/tech/internet/stop-illegal-routing-of-internet-telephony-calls-coai/articleshow/52133359.cms).

Respondent No. 4 claims that it is a “mere application provider”, unlike the Petitioner, which is an “access provider”. This statement ignores that the Petitioner is most certainly both and that, to provide its Internet Telephony/VoIP services in India, the Petitioner has fully complied with the existing licensing regime applicable to such services.

Respondent No. 4 also submits that “the relevant regulatory authorities are seized of the issue and the consultation process is ongoing”. Respondent No. 4 is misleading this Hon’ble Court; in reality, the regulators have already spoken, and they will not do anything further to enforce the law as currently written. Instead, TRAI recommends that, going forward, “Market forces” should dictate a solution.

Contrary to what is submitted by Respondent No. 4, there is no need to create a new regime applying to “OTT services”, and the Petitioner is certainly not requesting one. Through this writ Petition, the Petitioner only prays that this Hon’ble Court enforce the laws and regulations currently in place.

Respectfully, TRAI has long had an agenda to grow the Internet user base in India. In 2010, TRAI acknowledged that growth in Internet users had fallen short of its own targets. See Recommendations on Spectrum Management and Licensing Framework, para 2.105 at page 104 (11 May 2010) (“Despite a token licence fee for ISP, the number of internet subscribers has grown from 5.14 million in September 2004 to only 15.24 million by the end of December 2009. Of this, the number of broadband subscribers is 7.83 million. These numbers are way below the target of 40 million and 20 million by the end of 2008 for internet and broadband subscribers respectively.”) (Publicly available at https://trai.gov.in/sites/default/files/FINALRECOMENDATIONS.pdf). To increase the number of Internet users in India, sometime after 2015, TRAI began tilting the scales in favor of OTTs and simply disregarded the current licensing regime when making recommendations. These efforts have been very successful, as shown by the hundreds of millions of customers Respondents No. 3 and No. 4 have accumulated since 2015.

Without referencing the applicable laws and regulations, TRAI recently concluded: “It is not an opportune moment to recommend a comprehensive regulatory framework for various aspects of services referred to as OTT services, beyond the extant laws and regulations prescribed presently. It may be looked into afresh when more clarity emerges in international jurisdictions particularly the study undertaken by ITU.” TRAI Press Release Regarding Recommendations on “Regulatory Framework for Over-the-top (OTT) communication services” (14 September 2020) [emphasis added] (Publicly available at https://trai.gov.in/sites/default/files/PR_No.69of2020.pdf). See also TRAI Recommendations on Regulatory Framework for Over-The-Top (OTT) Communication Services, para 2.4(iii) at page 8 (“Since, ITU deliberations are also at study level, therefore conclusions may not be drawn regarding the regulatory framework of OTT services. However, in future, a framework may emerge regarding cooperation between OTT providers and telecom operators. The Department of Telecommunications (DoT) and Telecom Regulatory Authority of India (TRAI) are also actively participating in the ongoing deliberations in ITU on this issue. Based on the outcome of ITU deliberations DoT and TRAI may take appropriate consultations in future.”) [emphasis added] (Publicly available at https://trai.gov.in/sites/default/files/Recommendation_14092020_0.pdf).

The ITU, however, has previously made it clear that it is not involving itself in India’s internal regulatory matters and is merely a spectator to such activities. See ITU Economic Impact of OTTs Technical Report 2017, Section 5.2 (India), at 33 (“India is in the process of reassessing its rules on online services, including OTT services. . . . As noted in Section 4.2, voice and messaging services are permitted to be offered only by firms that hold a licence. Internet Protocol (IP) based voice and messaging services can also be offered by licensed network operators as unrestricted Internet Telephony Services; however, these services may not interconnect with traditional switched services. The dichotomy between regulated traditional services and largely unregulated OTT services leads to numerous anomalies.”) [emphasis added] (Publicly available at https://www.itu.int/dms_pub/itu-t/opb/tut/T-TUT-ECOPO-2017-PDF-E.pdf).

As for the local ITU branch, the ITU-APT Foundation of India has already sided with Respondent No. 4’s claim that there is an “intelligible differentia” between its Internet Telephony services and the Petitioner’s Internet Telephony services. ITU-APT Foundation of India comments on TRAI OTT consultation (7 January 2019) at 3 (“The Consultation Paper (“CP”) draws parallels between the communication services offered by OTT service providers and TSPs. However, we would like to submit that the services offered by them are widely different and cannot be compared.”) [emphasis added] (Publicly available at https://trai.gov.in/sites/default/files/ITUAPT08012019.pdf).

This position is not surprising given that according to the ITU-APT Foundation of India: “Facebook’s, [sic] one of our valued corporate member[sic] announce a major investment in Reliance Jio that would facilitate the ailing telecom Industry. The two companies said that they will work together on some major initiatives that would open up commerce opportunities for people across India.” ITU-APT Weekly News Summary [emphasis added] (Publicly available at https://itu-apt.org/itu-letter.pdf).

Rather than rely on the ITU, TRAI should have given more weight to the deliberations of the Confederation of Indian Industry (CII), which recognizes that OTT providers are already governed by the present licensing regime. See CII Response to TRAI Consultation Paper on Regulatory Framework for Over-The-Top (OTT) Communication Services at 6 (7 January 2019) (“Any new regulations for TSPs and OTTs should be considered taking into account the respective regulations govern the TSPs and the OTTs under the Telegraph Act, license, TRAI Act and the Information Technology Act. The Authority should consider new future fit frameworks that lightens the regulatory burden and adopts a progressive approach that allows all entities in the eco-system to proliferate and grow – offering maximum benefits to the consumers.”) [emphasis added] (Publicly available at https://trai.gov.in/sites/default/files/ConfederationofIndianIndustry08012019.pdf). CII has long been a major force in advocating what is in the best interest of Indian businesses, and it does not care about the interests of US-based monopolies: “The journey began in 1895 when 5 engineering firms, all members of the Bengal Chamber of Commerce and Industry, joined hands to form the Engineering and Iron Trades Association (EITA). . . . Since 1992, through rapid expansion and consolidation, CII has grown to be the most visible business association in India.” [emphasis added] (Publicly available at https://www.cii.in/about_us_History.aspx?enc=ns9fJzmNKJnsoQCyKqUmaQ==).

It is submitted that a comprehensive licensing regime is already in place, which covers not only interception rules, penalties, and security issues but also license fees, tariffs, and mode of operation, among other things. It is further submitted that Respondent No. 4’s position on interception rules and end-to-end encryption, which it claims are covered under the IT Act and other rules and which it publicly opposes, amounts to mere crumbs from a pie: the Indian Wireless Telegraphy Act, 1933; the Indian Telegraph Act, 1885; the Information Technology Act, 2000; and the Telecom Regulatory Authority of India Act, 1997 together provide the complete pie, and once Respondent No. 4 is brought under those laws, it will have to comply with all the rules and regulations on par with the Petitioner.

The Petitioner and Respondent No. 4 are indeed “equals” in that they provide the same Internet Telephony/VoIP service, yet they are treated “unequally” by Respondents No. 1 and No. 2. It is submitted that only the Petitioner is required to comply with the licensing regime applicable to providing such telephony services.

Individual citizens forming a legal entity or juristic person can invoke fundamental rights. It is submitted that the ameliorative relief sought by the Petitioner is the issuance of a writ by this Hon’ble Court directing that the applicable laws and regulations be complied with and enforced against the unregulated/unlicensed Internet Telephony/VoIP service provider, Respondent No. 4 herein.

It is denied that the issues raised by this Petition are being “considered and decided by DoT and TRAI, the regulatory authorities with the expertise and experience to address such issues.” It has been over five years since the issue of an uneven playing field was raised with Respondent No. 2 regarding Respondent No. 4.

Petitioner through this writ Petition is praying that the existing laws and regulations are fairly applied and enforced as to all companies no matter how large and powerful they are. It is humbly submitted that if the unlawful conduct uncovered by this writ Petition is not addressed by this Hon’ble Court, Respondent No. 4 will likely forever be left unchecked to do what it likes in India.

It is submitted that on 19 November 2019, the Minister of Home Affairs was asked “whether the Government does Tapping of WhatsApp calls and Messages in the country” and responded without directly answering the question, but implied that such “tapping of WhatsApp calls and messages” was occurring by referencing the same interception rule cited by Respondent No. 4 in its submission. “Government Of India, Ministry Of Home Affairs, Lok Sabha, Unstarred Question No: 351” (Publicly available at http://loksabhaph.nic.in/Questions/QResult15.aspx?qref=6696&lsno=17). The Hon’ble Court has no way of knowing whether Respondent No. 4 is helping law enforcement, exactly how Respondent No. 4 is helping law enforcement, or whether Respondent No. 4 could do more to help.

Whether or not Respondent No. 4 acts consistently with its public pronouncements and does not actually access user accounts is of little importance compared with the fact that Respondent No. 4 admittedly does not comply with the licensing requirements applicable to providers of Internet Telephony/VoIP services.

It is denied that there is no financial loss to the national exchequer, given the complete failure to collect any entry fee, license fee, or goods and services tax from India’s largest operator of Internet Telephony services. A loss of income naturally results when licensing fees are not paid. See Cellular Operators Association of India (COAI) Counter Comments TRAI Consultation Paper on Internet Telephony Released, 22 July 2016, at 1 (“Internet Telephony provided by unlicensed entities besides being in violation of license will not only deprive the licensed operators of huge revenue but will also result in lesser payout to exchequer in the form of reduced license fee on revenues.”) [emphasis added] (Publicly available at https://www.trai.gov.in/sites/default/files/201609161151061091227COAI.pdf).

It is denied that Respondent No. 4’s unregulated conduct actually “generates more revenue for the government by enhancing investments in data networks, and consequent increases in license fees.” [emphasis added]. Even the ITU-APT Foundation of India acknowledges that the infrastructure growth created by OTT providers happens in the USA and not in India. See ITU-APT Foundation of India comments on TRAI OTT consultation (7 January 2019) at 5 (“It is estimated that OTT investments in infrastructure is fast growing, and the bigger OTT players invested 9% of their 2011-2013 revenues in networks and facilities in the US. This trend can be replicated in India with the right regulatory environment which would recognize and incentivize greater investments rather than stifle the industry with arbitrarily applicable licenses.”) [emphasis added] (Publicly available at https://trai.gov.in/sites/default/files/ITUAPT08012019.pdf). Both the ITU-APT Foundation of India and Respondent No. 4 are wrong, however, given that Respondent No. 2’s failure to enforce existing laws has already created the “right regulatory environment” for the bigger OTT players. It is also clear that neither Respondent No. 3 nor Respondent No. 4 has any intention of building networks or facilities in India, given that both have withdrawn their prior physical presence in India and neither currently has even an office in India.

It is submitted that the question is not whether a licensing regime should apply to OTTs, since the existing regime already does apply; the real question is whether the existing laws and regulations will be enforced by Respondents No. 1 and No. 2.

It is submitted that this Petition seeks the intervention of this Hon’ble Court to enforce the laws as written. It is denied that the Petitioner seeks to have the Hon’ble Court “displace” regulatory authorities; the Petitioner seeks only the enforcement of existing laws and regulations applicable to all providers of Internet Telephony/VoIP services, even those who claim to ride on the telecommunications rails built and maintained by other companies.

It is denied that Respondent No. 4 was singled out in the writ Petition. Unlike Respondent No. 4, other similar service providers such as “Skype” have near-zero market share compared with Respondents No. 3 and No. 4. It is submitted that Skype was once the undisputed dominant provider in India, but after its corporate parent, Microsoft, was sued by the Petitioner in 2014, Skype removed the ability to call mobiles and landlines within India from Skype. In that case, the Hon’ble Court in the United States found that the Petitioner was better served by filing a writ petition in India rather than in the United States. TI Investment Services, LLC & World Phone Internet Service Pvt. Ltd. v. Microsoft Corp., 23 F. Supp. 3d 451, 472 (D.N.J. 2014) (“The Courts of India are better positioned to determine whether their own national laws have been violated, and, if so, what the antitrust consequences, if any, are in their national market. If Plaintiffs wish to renew their suit, they should do so in the jurisdiction where they are alleged to have competed with Defendant, to have complied with regulatory laws, and to have suffered injury, and that is India.”).

It is further submitted that, unlike Microsoft and even Google, Respondent No. 4 flagrantly violates existing regulatory prohibitions by, for example, allowing Indian users of its free “WhatsApp Business” app to use their landline phone numbers for messaging with customers. See WhatsApp Business App Android Download Page (“You can use WhatsApp Business with a landline (or fixed) phone number and your customers can message you on that number.”) (Publicly available at https://play.google.com/store/apps/details?id=com.whatsapp.w4b&hl=en_IN&gl=IN). As recognized even by TRAI, such unlicensed services run afoul of the existing licensing regime. See Consultation Paper on Regulatory Framework for Over-the-top (OTT) services, para 2.40 at page 28 (27 March 2015) (“Under the current telecom licensing regime, voice and messaging services can be offered only after obtaining a license. Apart from traditional voice and messaging, IP based voice and messaging services can also be offered by TSPs as unrestricted Internet Telephony Services, which are permitted under the scope of the Unified Access Service (UAS) license in terms of the UAS Guidelines dated 14th December 2005. Similar provisions exist for Cellular Mobile Telephone Service (CMTS) and Basic Service Licences. However, the scope of the Internet Services Licence was restricted to Internet Telephony Services without connectivity to Public Switch Telephone Network (PSTN)/Public Land Mobile Network (PLMN) in India.”) [emphasis added] (Publicly available at https://trai.gov.in/sites/default/files/OTT-CP-27032015.pdf).

It is denied that Respondent No. 4 can freely provide telecommunication services and ignore the Unified License Agreement because it relies on networks built by other companies. It is submitted that Respondent No. 4 at one point was building out its physical presence in India for regulatory reasons. By way of background, on 6 April 2018, the Reserve Bank of India issued its Directive, Storage of Payment System Data, requiring that: “All system providers shall ensure that the entire data relating to payment systems operated by them are stored in a system only in India.” Directive on Storage of Payment System Data, 6 April 2018, (Publicly available at https://www.rbi.org.in/scripts/NotificationUser.aspx?Id=11244&Mode=0).

Soon thereafter, Respondent No. 4 announced the appointment of Abhijit Bose as head of “WhatsApp India”, “WhatsApp’s first full country team outside of California . . . based in Gurgaon.” Respondent No. 4’s company statement is no longer available on its website, but press accounts of this statement can still be found online. “WhatsApp appoints Abhijit Bose as head of WhatsApp India”, The Economic Times (21 Nov 2018) (Publicly available at https://economictimes.indiatimes.com/tech/internet/whatsapp-appoints-abhijit-bose-as-head-of-whatsapp-india/articleshow/66735848.cms). According to Mr. Bose’s November 2018 statement as recounted by The Economic Times: “WhatsApp can positively impact the lives of hundreds of millions of Indians, allowing them to actively engage and benefit from the new digital economy.” Id. The Economic Times also reported in that article: “Apart from the traceability request, the government had had asked WhatsApp to set up a local corporate presence. . . .” Id. After finding a way to maneuver around the Reserve Bank of India’s 2018 Directive, on 6 November 2020, Respondent No. 4 announced the launch of its payment platform without having any “local corporate presence” that would store “data related to payments”. See “Send Payments in India with WhatsApp”, WhatsApp Blog (6 November 2020) (Publicly available at https://blog.whatsapp.com/send-payments-in-india-with-whatsapp). As with Respondent No. 3’s massive build-out of its physical presence in India, Respondent No. 4’s “company statement” regarding the building of “WhatsApp India’s” physical presence in India can no longer be found on Respondent No. 4’s website.

More importantly, as with Respondent No. 3, Respondent No. 4 no longer has any physical presence in India, despite the country being Respondent No. 4’s largest country market. And, without Respondent No. 4 having any physical presence in the country, Mr. Bose, still apparently head of “WhatsApp India”, announced in July 2020: “Our collective aim over the next two to three years should be to help low-wage workers and the unorganised, informal economy easily accesses three products – insurance, micro-credit and pensions.” See “Facebook’s WhatsApp to partner with more Indian banks in financial inclusion push”, Reuters Article (22 July 2020) (Publicly available at https://www.reuters.com/article/us-whatsapp-india-idUSKCN24N24E). It is further submitted that Respondent No. 4, which already dominates Internet Telephony, messaging, and mobile payments, plans to dominate the provision of access to “insurance, micro-credit and pensions”. It is submitted that this blatant form of digital colonialism should respectfully be rejected by way of this present writ Petition.

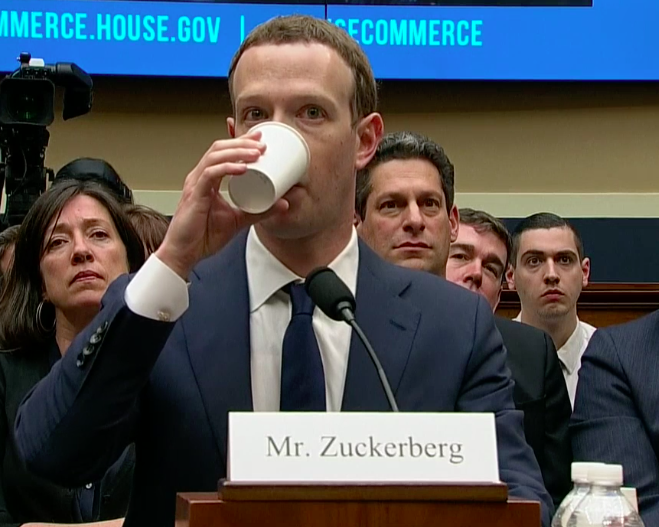

Respondent No. 4 submits that it need not comply with the Unified License Agreement, despite providing “telecommunication services”, simply because it uses for free the networks built by others. The relevant regulatory authorities have been aware of the matters set forth in the Petition for over five years without enforcing public laws and their own regulations, which is why DoT is named as Respondent No. 2 in this matter. Last year alone, Respondent No. 3 generated revenues of more than US$85 billion and profits of more than US$29 billion. These numbers will grow exponentially as the “free” unlicensed products currently offered to Indians become further monetized by Respondents No. 3 and No. 4.

Other than the present writ Petition, there is no available “statutory remedy” that would otherwise cause the enforcement of applicable law. It is respectfully submitted that the Hon’ble Court should intercede to ensure equal protection under the law. It is further humbly submitted that if the Hon’ble Court does not intercede to stop the digital colonialism of Respondents No. 3 and No. 4, the same will go forward unabated. Considering the foregoing facts and circumstances, it is therefore respectfully prayed to this Hon’ble Court to kindly allow the prayer of relief sought by the Petitioner, in the interest of justice, including enjoining Respondent No. 4 from providing Internet Telephony/VoIP services until such time as Respondent No. 4 is in full compliance with the applicable requirements for providing such services in the Union of India.